- Pilot workplace changes with 50-200 people for 8-12 weeks before committing

- Define a specific hypothesis and success metrics before you start anything

- Collect both utilization data and employee feedback weekly

- End every pilot with a clear decision: scale, iterate, or kill

- Communicate the outcome transparently, even if the answer is "no"

A workplace pilot program is how you test a workplace change on a small group before rolling it out company-wide. It's the difference between a calculated bet and a coin flip. Whether you're shifting to a hybrid schedule, redesigning a floor, or consolidating offices, piloting lets you learn what works (and what doesn't) without putting your entire workforce through an experiment they didn't sign up for.

Why do a workplace pilot program?

Most workplace changes fail not because the idea was bad, but because the rollout was. A new seating arrangement, a return-to-office policy, a shift to hot desking: these are all reasonable strategies that can blow up when you skip the testing phase and go straight to mandate.

The data backs this up. U.S. employee engagement hit 31% in 2025, down from a peak of 36% in 2020. That's a workforce already skeptical of top-down decisions. Layering on a major workplace change without employee input is a recipe for resistance, not adoption.

Meanwhile, global office utilization reached 53% in 2026, which means nearly half of office space still sits empty on any given day. Companies are spending real money on space that isn't working. The instinct is to do something big: consolidate, redesign, mandate three days in-office. But big moves carry big risk.

Pilots give you a controlled environment to test assumptions. They surface problems you didn't anticipate. And they turn skeptics into ambassadors, because people who participate in designing a change are far more likely to support it. As MIT Sloan's research on iterative work design puts it, organizations can seed transformation by collectively uncovering "everyday disconnects" between how we expect work to happen and how it actually does.

Here's the six-step framework.

Step 1: Define your hypothesis

Every good pilot starts with a specific, testable statement. Not "let's try hybrid" but "we believe that requiring the sales team to be in-office Tuesday through Thursday will improve cross-team collaboration without hurting individual productivity."

This matters because a vague pilot produces vague results. If you don't know what you're testing, you won't know what you've learned.

Write your hypothesis using this structure:

- We believe that [specific change]

- For [specific group]

- Will result in [measurable outcome]

- As measured by [specific metric]

For example: "We believe that switching Floor 3 to neighborhood seating will increase cross-functional collaboration, as measured by a 15% rise in meeting room bookings between departments and a positive shift in collaboration satisfaction scores."
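If it helps to keep hypotheses consistent across pilots, the four-part structure can be captured as a small record. This is only a sketch: `PilotHypothesis` and its field names are made up for illustration, and the example values are paraphrased from the Floor 3 example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotHypothesis:
    """One testable statement, following the four-part structure above."""

    change: str   # We believe that [specific change]
    group: str    # For [specific group]
    outcome: str  # Will result in [measurable outcome]
    metric: str   # As measured by [specific metric]

    def statement(self) -> str:
        # Assemble the four parts into a single sentence.
        return (
            f"We believe that {self.change} for {self.group} "
            f"will result in {self.outcome}, as measured by {self.metric}."
        )


# Illustrative values, paraphrased from the Floor 3 example.
hypothesis = PilotHypothesis(
    change="switching to neighborhood seating",
    group="Floor 3",
    outcome="more cross-functional collaboration",
    metric="a 15% rise in meeting room bookings between departments",
)
print(hypothesis.statement())
```

Forcing every pilot through the same four fields makes it obvious when one of them is missing or fuzzy.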

Tie the hypothesis to a business outcome your leadership team actually cares about: retention, productivity, real estate cost, or employee satisfaction. If you can't connect the pilot to something on the executive agenda, it'll be hard to get budget and harder to get attention when results come in. If you're exploring corporate real estate strategy, the hypothesis should map directly to portfolio decisions.

One hypothesis per pilot. If you're testing three things at once, you won't know which one caused the results.

Step 2: Select your pilot team and location

Pick a group of 50 to 200 people on one floor or in one building. That's large enough to produce meaningful data and small enough to manage without a full program office.

The temptation is to pick your most enthusiastic team, the one that's already excited about the change. Don't. A pilot stacked with early adopters will produce artificially positive results that fall apart at scale. You need a representative sample.

Here's what "representative" means in practice:

- Mix of roles: Include individual contributors, managers, and at least one senior leader

- Mix of attitudes: Recruit some skeptics alongside supporters

- Mix of work styles: Include people who collaborate heavily and people who do deep focus work

- Realistic conditions: The pilot space and tools should mirror what you'd actually roll out

A good rule of thumb is 10-20% of the total affected population. If you're planning a change for 500 people, pilot with 50-100. For a company-wide shift, pick one office that's demographically similar to the rest.
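The 10-20% rule of thumb is simple arithmetic, sketched below. The function name `pilot_size_range` is hypothetical, and rounding down to whole people is an assumption.

```python
def pilot_size_range(affected_population: int) -> tuple[int, int]:
    """Return the (low, high) pilot headcount at 10% and 20% of the population."""
    if affected_population <= 0:
        raise ValueError("affected_population must be positive")
    # Integer arithmetic avoids floating-point surprises; results round down.
    low = affected_population * 10 // 100
    high = affected_population * 20 // 100
    return low, high


print(pilot_size_range(500))  # (50, 100)
```

For very small or very large populations, the result will fall outside the 50-200 range suggested earlier, which is a cue to pick a single representative floor or office instead.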

If you're testing a hot desking policy, don't pilot it on a floor where everyone already shares desks. Test it where people currently have assigned seats. That's where the friction will be, and friction is what you need to find.

Document why you chose this group. When you present results later, leadership will ask whether the findings are generalizable. Having a clear selection rationale makes that conversation easier.


Step 3: Set success metrics before you launch

This is where most pilots go wrong. Teams run the experiment, collect a bunch of data, and then argue about what "success" looks like after the fact. Set your metrics upfront, before anyone moves a desk.

You need three categories of metrics:

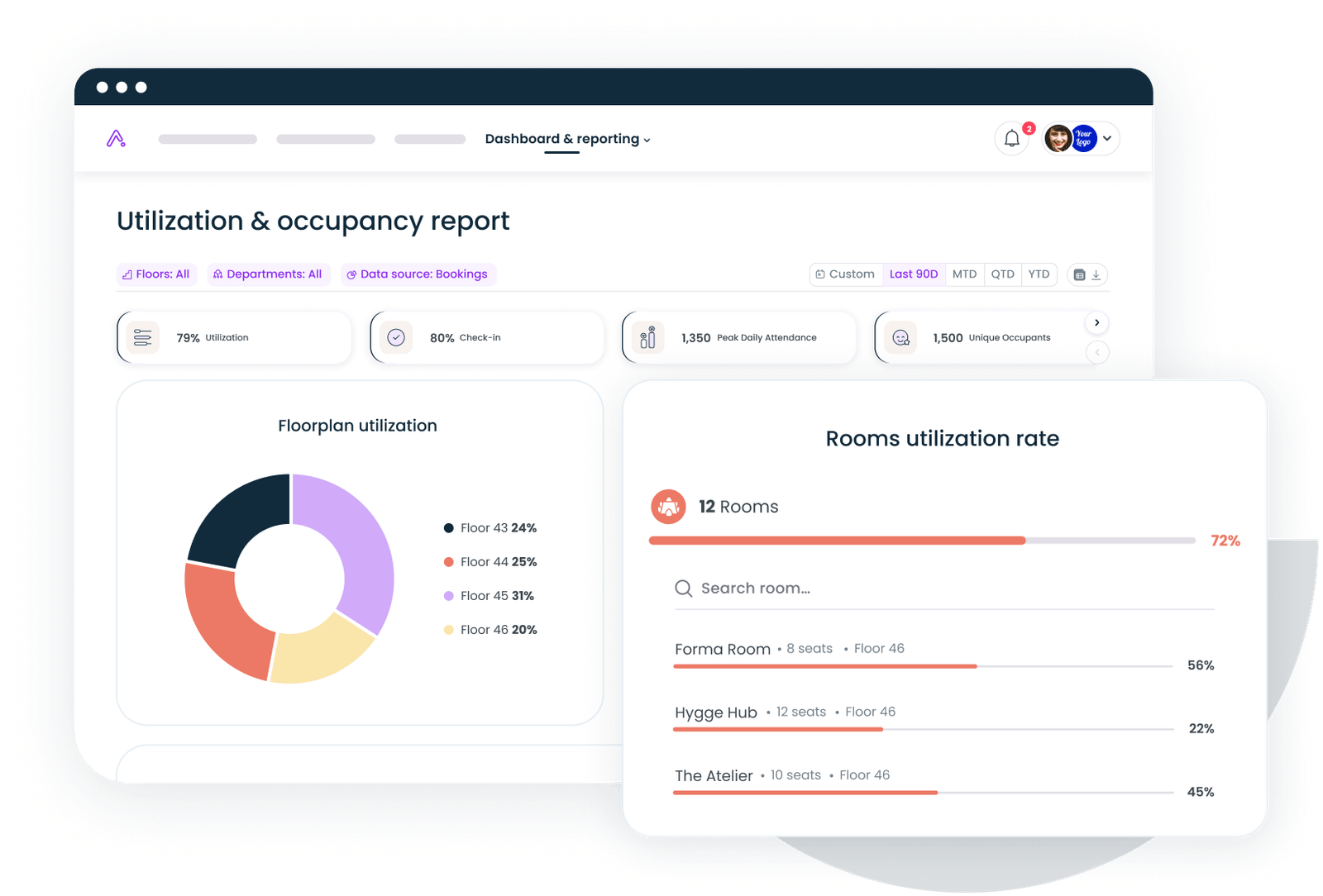

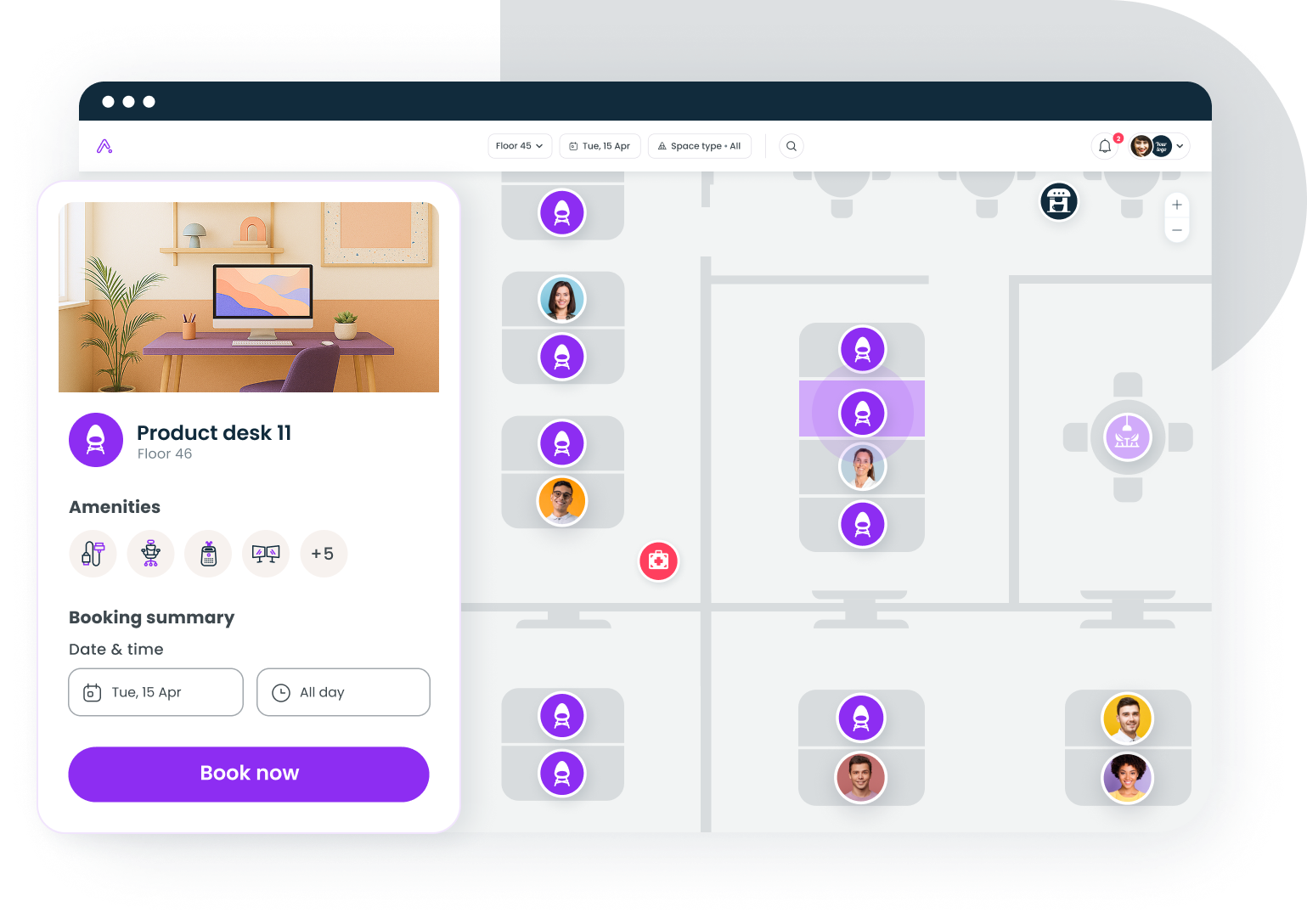

Utilization metrics tell you whether people are actually using the space as intended. Track desk occupancy rates, meeting room bookings, peak vs. off-peak usage, and no-show rates. If you're testing a three-day hybrid schedule, you need to know whether people are actually showing up on the designated days. Understanding space utilization metrics is foundational here.

Satisfaction metrics tell you how people feel about the change. Run a short pulse survey weekly or biweekly. Include questions about collaboration quality, focus time, commute burden, and overall workspace satisfaction. Use a consistent scale so you can track trends. The right engagement survey questions make the difference between useful signal and noise.

Business metrics tell you whether the change is moving the needle on outcomes that matter. Depending on your hypothesis, this could be retention rates, project velocity, sales pipeline activity, or real estate cost per person.

Establish baselines for all three categories before the pilot starts. You can't measure improvement if you don't know where you started.

Set specific thresholds for each metric. "Desk utilization above 65%" is a success criterion. "People seem to like it" is not.
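One way to keep thresholds honest is to write them down as data before launch and check them mechanically afterward. The sketch below is illustrative only: `Criterion`, the `no_show_rate` threshold, and the observed values are assumptions, and treating a threshold as met when the value is exactly equal to it is a choice you may want to make differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """A single success threshold, fixed before the pilot starts."""

    metric: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        # Inclusive comparison: landing exactly on the threshold counts as met.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


criteria = [
    Criterion("desk_utilization", 0.65),
    Criterion("no_show_rate", 0.10, higher_is_better=False),  # hypothetical threshold
]

# Illustrative end-of-pilot values, not real data.
observed = {"desk_utilization": 0.72, "no_show_rate": 0.14}

results = {c.metric: c.is_met(observed[c.metric]) for c in criteria}
print(results)  # {'desk_utilization': True, 'no_show_rate': False}
```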

Step 4: Run for 8-12 weeks with weekly check-ins

Eight weeks is the minimum for a workplace pilot. Anything shorter and you're measuring the novelty effect, not the actual change. Twelve weeks gives you enough time for people to settle into new habits and for seasonal variation to show up.

Here's the weekly rhythm:

Weeks 1-2: Orientation period. People are adjusting. Expect confusion, complaints, and lower-than-expected adoption. This is normal. Don't panic and don't make changes yet. Focus on answering questions and removing logistical blockers.

Weeks 3-6: Stabilization. Habits start forming. This is when your data gets meaningful. Run your first pulse survey at the end of week 3. Compare utilization data week-over-week to spot trends.

Weeks 7-10: Steady state. If the change is going to work, you'll see it here. Utilization patterns stabilize. Satisfaction scores plateau. This is your most reliable data window.

Weeks 11-12: Wrap-up. Run your final survey. Conduct focus groups or one-on-one interviews with 10-15 participants. Collect qualitative stories that bring the numbers to life.

Hold a 30-minute check-in every week with your pilot team leads. Review the data, flag issues, and decide whether any adjustments are needed. Keep a running log of every change you make during the pilot; this becomes critical context when you analyze results.
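For the weekly data review, the week-over-week comparison can be as simple as the sketch below. The helper name and the utilization figures are invented for illustration.

```python
def week_over_week(values: list[float]) -> list[float]:
    """Percentage-point change from each week to the next."""
    return [round(curr - prev, 1) for prev, curr in zip(values, values[1:])]


# Weekly desk utilization in percent (illustrative numbers, weeks 1-6).
weekly_utilization = [48.0, 51.0, 58.0, 63.0, 66.0, 67.0]

print(week_over_week(weekly_utilization))  # [3.0, 7.0, 5.0, 3.0, 1.0]
```

Shrinking changes toward the end of the series are one sign that usage is settling into a steady state.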

One important rule: don't change the core hypothesis mid-pilot. If you realize halfway through that you're testing the wrong thing, note it and finish the pilot anyway. You'll still learn something. Starting over resets the clock and burns credibility with participants.

Getting employee buy-in during this phase isn't optional. Participants who feel heard will give you honest feedback. Participants who feel like test subjects will give you nothing useful.

Step 5: Measure outcomes and decide

The pilot is over. Now comes the part that separates rigorous workplace teams from ones that just go through the motions.

Pull all your data into a single view: utilization numbers, survey results, business metrics, qualitative feedback from interviews. Compare everything against your pre-pilot baselines and the success thresholds you set in Step 3.

You have three options:

Scale. The data clearly supports the hypothesis. Utilization met or exceeded targets. Satisfaction improved or held steady. Business metrics moved in the right direction. You're confident the results will generalize. Build a rollout plan.

Iterate. The results are mixed. Some metrics hit the mark; others didn't. Qualitative feedback suggests the concept is sound but the execution needs work. Design a second pilot that addresses the specific issues. Maybe you need better signage, different technology, or a modified schedule.

Kill. The data says no. Utilization was low, satisfaction dropped, or the business case didn't materialize. This isn't a failure. It's a $20K lesson instead of a $2M mistake. Document what you learned and move on.
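As a first pass, the three options can be reduced to a simple rule over your pre-set thresholds. This is a deliberately crude sketch, assuming all criteria met means scale, none met means kill, and anything in between means iterate. The function name and metric names are hypothetical, and the rule ignores the qualitative feedback that should also inform the real decision.

```python
def decide(criteria_met: dict[str, bool]) -> str:
    """Map threshold results to a first-pass decision: scale, iterate, or kill."""
    if not criteria_met:
        raise ValueError("no criteria to evaluate")
    met = sum(criteria_met.values())
    if met == len(criteria_met):
        return "scale"    # every threshold was met
    if met == 0:
        return "kill"     # no threshold was met
    return "iterate"      # mixed results


print(decide({"desk_utilization": True, "collaboration_satisfaction": True}))   # scale
print(decide({"desk_utilization": True, "collaboration_satisfaction": False}))  # iterate
print(decide({"desk_utilization": False, "collaboration_satisfaction": False})) # kill
```

Writing the rule down before results arrive makes it harder to move the goalposts later.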

The hardest part of this step is intellectual honesty. If you championed the change, it's tempting to cherry-pick the data that supports it. Don't. Present the full picture, including the parts that are inconvenient.

Gable's workplace analytics platform can make this step significantly easier by automatically tracking utilization, attendance, and booking patterns throughout the pilot, so you're working with real data instead of manually assembled spreadsheets.

For a deeper look at what to measure and how to frame the business case, workplace ROI metrics is a good starting point.


Step 6: Communicate the decision and the why

This is where most workplace teams drop the ball. They run a great pilot, make a sound decision, and then announce it in a two-paragraph email that leaves everyone guessing.

Communication after a pilot needs to be thorough, transparent, and fast. Here's the sequence:

First, tell the pilot participants. They gave you their time and honest feedback. They deserve to hear the outcome before anyone else. Share the key findings, the decision, and how their input shaped it. Do this in person or on a video call, not in an email.

Second, tell the broader organization. Explain what you tested, what you learned, and what happens next. Be specific. "We found that desk utilization on the pilot floor averaged 72% on anchor days, up from 45% before the pilot. Employee satisfaction with collaboration improved by 18 points. Based on these results, we're rolling out the new schedule to all three floors starting in Q3."

Third, if you're scaling, outline the timeline. People want to know when the change is coming and what it means for them. Give dates, not vague promises.

Fourth, if you're killing the idea, say so directly. "We tested X. The data showed Y. We're not moving forward, and here's what we're exploring instead." Honesty builds trust. Silence breeds conspiracy theories. For guidance on getting this right, communicating office policy changes covers the nuances.

The pilot participants who went through a successful change management process become your best ambassadors. They can describe their own experience to colleagues, answer questions, and help smooth the transition. That's worth more than any executive memo.

Common mistakes that sink workplace pilots

Even well-intentioned pilots fail for predictable reasons. Here are the ones I see most often:

Testing too many variables at once. You changed the seating layout, introduced hot desking, and shifted to a three-day schedule simultaneously. When results come in, you have no idea which change caused what. One variable per pilot.

Picking the wrong duration. Two-week pilots measure excitement, not behavior change. Globally, 93% of companies plan to run pilots, but many run them too short to produce actionable data. Commit to at least eight weeks.

Ignoring the control group. If you can, keep a comparable team on the old setup during the pilot period. Without a control, you can't distinguish pilot effects from seasonal trends or company-wide changes.

Skipping the baseline. You can't prove improvement without a starting point. Collect at least four weeks of pre-pilot data on every metric you plan to track.

Declaring victory too early. Week 3 looks great, so you announce the rollout. Then week 6 data shows the novelty wore off. Let the full pilot run before making decisions.
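If you do have both a baseline and a control group, one common way to combine them is a difference-in-differences calculation: subtract the control group's change from the pilot group's change. The article doesn't prescribe this method, so treat the sketch below as one option. The pilot figures reuse the 45% and 72% from the communication example in Step 6; the control figures are invented.

```python
def difference_in_differences(
    pilot_before: float,
    pilot_after: float,
    control_before: float,
    control_after: float,
) -> float:
    """Change in the pilot group minus change in the control group."""
    return (pilot_after - pilot_before) - (control_after - control_before)


# Desk utilization in percent. Control values are illustrative only.
effect = difference_in_differences(
    pilot_before=45.0,
    pilot_after=72.0,
    control_before=44.0,
    control_after=50.0,
)
print(effect)  # 21.0
```

Here the pilot floor rose 27 points, but the control floor rose 6 on its own, so only about 21 points can reasonably be attributed to the change.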

Making the case to leadership

If you need executive buy-in to run a pilot, frame it as risk mitigation, not experimentation. Executives don't want to hear "let's try something." They want to hear "here's how we avoid a costly mistake."

The math is straightforward. A workplace pilot for 100 people on one floor might cost $15K-$50K in setup, technology, and staff time. A failed office redesign or botched RTO mandate can cost millions in lease commitments, turnover, and lost productivity. The pilot is insurance.
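To put a number on the insurance framing, you can ask how likely the pilot needs to be to prevent a failure before it pays for itself. The sketch below assumes a simple expected-value view (the pilot is worth it when the chance of preventing the failure times the failure cost exceeds the pilot cost) and ignores every other benefit. It uses the top of the cost range above and the $2M figure from Step 5. The function name is hypothetical.

```python
def break_even_probability(pilot_cost: float, failure_cost: float) -> float:
    """Minimum chance of preventing the failure for the pilot to pay for itself."""
    if failure_cost <= 0:
        raise ValueError("failure_cost must be positive")
    return pilot_cost / failure_cost


print(break_even_probability(50_000, 2_000_000))  # 0.025
```

In other words, under these assumptions a $50K pilot breaks even if it has even a 2.5% chance of stopping a $2M mistake.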

71% of Fortune 100 firms still offer flexible work arrangements, a sign that most large companies are still figuring out what their workplace should look like. A pilot gives you data-backed answers instead of opinions. That's a much easier conversation to have with a CFO.

If you're building the broader business case for workplace technology to support the pilot, this framework walks through the financial justification step by step.

The bottom line on workplace pilot programs

Workplace changes are too expensive and too consequential to get wrong. A pilot doesn't guarantee success, but it dramatically reduces the cost of failure. It gives you data instead of assumptions, ambassadors instead of resisters, and confidence instead of hope.

The framework is simple: hypothesis, team, metrics, run, measure, communicate. The discipline is in actually following it, especially the parts where the data tells you something you didn't want to hear.
