- 95% of companies use AI, but only 16% of employees strongly agree their tools are useful

- The real barrier is organizational clarity and training, not the technology itself

- Shadow AI is already in your org; govern it or lose control of it

- Measure adoption like a product: cohorts, frequency, business outcomes

- AI handles logistics; your job is bringing people together for what AI can't do

Ninety-five percent of companies now use AI in some capacity. Fewer than four in ten have formally integrated it into workflows. That gap, the distance between "we bought licenses" and "this changes how we work," is where workplace AI adoption either creates competitive advantage or becomes an expensive line item nobody can justify. This guide breaks down where adoption stands in 2026, why scaling it is harder than buying it, and what workplace leaders can do about both.

What workplace AI adoption actually means (and what it doesn't)

Downloading ChatGPT on your phone isn't adoption. Neither is a CEO announcing an "AI-first culture" during an all-hands meeting. Workplace AI adoption is the sustained, measurable integration of AI tools into how employees complete real work: summarizing meeting notes, generating first drafts of reports, analyzing space utilization patterns, or automating repetitive scheduling tasks.

The distinction between organizational adoption and individual adoption matters more than most leaders realize. A company can check the "we use AI" box because 12 people in engineering are using Copilot. That's individual adoption. Organizational adoption means structured rollout, training programs, governance policies, and clear measurement of whether the tools are changing outcomes.

Generative AI versus traditional AI

Traditional AI has lived inside workplace tools for years. Your spam filter uses it. So does your HRIS when it flags anomalies in headcount data. Generative AI, the kind driving the current wave of adoption anxiety and excitement, creates new content: text, images, code, analysis. The workplace implications are fundamentally different because generative AI touches knowledge work directly, the kind of work that 60% of the US labor force does every day.

Three numbers frame why this matters right now. Microsoft's 2025 Work Trend Index found that 79% of leaders agree their company needs AI to stay competitive. The World Economic Forum reported that AI adoption jumped 55% in a single year. And Gallup's Q4 2025 survey revealed that only 16% of employees strongly agree the AI tools their organization provides are useful for their work. The gap between leadership enthusiasm and employee utility is the central challenge of workplace AI adoption in 2026.

Where AI adoption stands right now

The headline numbers look impressive until you read the fine print. ActivTrak's 2026 State of the Workplace Report puts overall adoption above 95% across companies of all sizes. The St. Louis Federal Reserve, using a tighter definition, measured generative AI work adoption growing from 33.3% to 37.4% in twelve months. That's growth, but it's not the revolution the vendor pitches promised.

The frequency story is more interesting than the adoption story

Daily AI use among technology workers hit 31% in late 2025, according to Gallup. Among all US workers, the number is far lower. What's shifting isn't how many people have tried AI; it's how often existing users rely on it. Frequent use (weekly or more) is climbing steadily, while overall "have you ever used it" adoption has flattened to a gain of roughly 1% per quarter.

This pattern mirrors what happened with workplace collaboration tools in 2020 and 2021. Adoption surged, then flattened. The companies that pulled ahead invested in moving from "everyone has a license" to "everyone knows when and why to use it."

Adoption by role and industry

Technology workers lead at 77% adoption. Financial services and professional services cluster around 55% to 60%. Retail, manufacturing, and healthcare lag below 30%, partly because frontline roles don't sit in front of laptops all day.

The role-based split is equally stark. Leaders and managers are roughly twice as likely as individual contributors to use AI weekly. That creates a dangerous dynamic: the people making decisions about AI rollout are the ones whose experience least represents the average employee's reality.

Why organizations struggle with AI adoption

"Unclear business value" is the number one barrier to AI adoption, according to Gallup's research. Not cost. Not technical complexity. Leaders can't articulate what specific problem AI solves for specific roles. That vagueness trickles down. When employees hear "use AI to be more productive" without concrete examples tied to their workflows, they nod politely and keep doing things the old way.

The training gap is enormous

McKinsey's "Superagency in the Workplace" report found that nearly half of employees want more formal AI training and believe it's the best path to adoption. Twenty-two percent report receiving no training at all. Compare that to the fact that 60% of leaders admit they lack a clear AI adoption plan, and a picture emerges: companies are expecting employees to figure it out on their own while leadership figures out the strategy.

A 200-person marketing department that receives a company-wide Copilot license with a 30-minute webinar isn't being set up for adoption. It's being set up for a 9% engagement rate at month three, which is exactly what most enterprise software rollouts produce without structured enablement.

Shadow AI is already inside your organization

Microsoft's research shows that 78% of AI users bring their own tools to work. They're pasting customer data into free-tier ChatGPT prompts. They're uploading proprietary documents to tools the security team has never vetted. They're doing this not out of malice but because the tools their company provides either don't exist, aren't useful (remember that only 16% strongly agree their tools are useful), or require too many approvals to access.

Shadow AI is the 2026 version of shadow IT, except the data governance implications are worse. When an employee uploads a Q3 financial forecast to a consumer AI tool, the data may enter a training pipeline the company can't audit, can't retract from, and probably doesn't even know about.

The fear factor is real but misunderstood

Only about 15% of employees fear AI will eliminate their job entirely. The more common anxiety is subtler: fear of looking incompetent while learning a new tool, fear of being the person who "doesn't get it," fear that AI adoption is a precursor to headcount reduction dressed up as innovation. That last fear isn't irrational. When a company announces AI productivity gains and layoffs in the same quarter, employees connect the dots regardless of whether leadership intended a link.

Key barriers to scaling beyond the pilot phase

Getting 50 enthusiastic early adopters to use an AI tool is easy. Getting 5,000 employees across 14 departments to change their daily workflows is a different problem entirely.

Leadership says "adopt AI" but hasn't defined what that means

Sixty percent of leaders lack a clear AI adoption plan. That statistic from Microsoft deserves more unpacking than it usually gets. "Lack a clear plan" doesn't mean they've done nothing. It means they've done disconnected things: bought licenses, hosted a hackathon, formed a task force that meets monthly, published an AI policy nobody's read. What they haven't done is define which workflows change, by when, with what training, measured by what metrics.

A workplace team at a 1,200-person company that can't answer "what does successful AI adoption look like for our facilities coordinators specifically" will produce adoption numbers that mirror the industry average: lots of sign-ups, very little sustained use.

The ROI measurement problem

Fifty-nine percent of leaders worry about quantifying AI productivity gains, per Microsoft. This worry is justified. If your primary metric is "time saved," you need baseline data on how long tasks took before AI, which most organizations never collected. If your metric is "output quality," you need a rubric that didn't exist before AI-generated content became the norm.

The companies making progress on measurement are treating adoption like a product launch, not an IT deployment. They track weekly active users by department. They measure frequency distributions, not averages. They survey for employee engagement changes tied to specific AI-assisted workflows. They compare cohorts: teams that received structured training versus teams that got a license and a wiki link.
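To make that concrete, here is a minimal Python sketch of three of those views: weekly active users by department, a frequency distribution, and a trained versus license-only cohort comparison. The log format, the field names, the bucket thresholds, and every number in `USAGE_LOG` are hypothetical, invented purely for illustration; a real export from your own tools will look different.

```python
from collections import Counter, defaultdict
from statistics import median

# Hypothetical usage log, one row per user per week:
# (user_id, department, cohort, iso_week, ai_sessions_that_week)
USAGE_LOG = [
    ("u1", "Marketing", "trained", "2026-W05", 6),
    ("u2", "Marketing", "license_only", "2026-W05", 1),
    ("u3", "Legal", "trained", "2026-W05", 4),
    ("u4", "Legal", "license_only", "2026-W05", 0),
    ("u5", "Marketing", "trained", "2026-W05", 12),
    ("u6", "Legal", "license_only", "2026-W05", 2),
]


def weekly_active_users(log, week):
    """Count users with at least one session in the given week, per department."""
    active = defaultdict(set)
    for user, dept, _cohort, wk, sessions in log:
        if wk == week and sessions > 0:
            active[dept].add(user)
    return {dept: len(users) for dept, users in active.items()}


def frequency_buckets(log, week):
    """Bucket users by sessions per week instead of reporting a single average."""
    buckets = Counter()
    for _user, _dept, _cohort, wk, sessions in log:
        if wk != week:
            continue
        if sessions == 0:
            buckets["none"] += 1
        elif sessions < 5:  # assumed threshold, tune to your own data
            buckets["occasional (1-4)"] += 1
        else:
            buckets["frequent (5+)"] += 1
    return dict(buckets)


def median_sessions_by_cohort(log, week):
    """Compare cohorts (for example trained vs. license-only) on median weekly sessions."""
    by_cohort = defaultdict(list)
    for _user, _dept, cohort, wk, sessions in log:
        if wk == week:
            by_cohort[cohort].append(sessions)
    return {cohort: median(values) for cohort, values in by_cohort.items()}


if __name__ == "__main__":
    week = "2026-W05"
    print(weekly_active_users(USAGE_LOG, week))
    print(frequency_buckets(USAGE_LOG, week))
    print(median_sessions_by_cohort(USAGE_LOG, week))
```

The median is used for the cohort comparison because a handful of power users can drag an average upward and hide the fact that most of a team barely touches the tool.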

Generational and role-based disparities create equity concerns

Gen Z employees adopt AI tools at roughly 1.5x the rate of workers over 50. That's not a training issue alone; it's a collaboration issue. When half a team uses AI to prepare for meetings and the other half doesn't, information asymmetry grows. Younger workers end up informally mentoring older colleagues, a reversal that can create interpersonal tension if not managed deliberately.

The white-collar versus frontline divide is even sharper. A corporate headquarters running on AI-augmented workflows while warehouse or retail teams see zero investment creates a two-tier employee experience that undermines culture and retention.

Gable's AI-powered insights turn complex workplace data into clear, actionable reports, so you can identify adoption gaps, track utilization, and build strategies grounded in real numbers instead of assumptions.

Best practices for successful workplace AI adoption

Start with workflows, not tools

The most common adoption mistake is tool-first thinking. "We're rolling out Copilot" tells employees nothing about what to do differently on Monday morning. Workflow-first adoption starts by identifying the three to five highest-friction tasks in a department (scheduling cross-timezone meetings, writing status reports, summarizing customer feedback, or analyzing workplace analytics data) and then mapping AI capabilities to those specific pain points.

A facilities team spending four hours weekly compiling occupancy reports from badge data, WiFi logs, and manual headcounts has a clear, measurable use case for AI. "Here's how to get that report in 20 minutes" is an adoption pitch that works. "AI will change how you work" doesn't land because it's too abstract to act on.

Build a phased rollout, not a big bang

Big bang rollouts produce a spike in week one and a crater by week eight. Phased rollouts let you catch problems early, like the discovery that your approval workflow adds three clicks to every AI interaction, which is enough friction to kill adoption in departments that process 40+ requests daily.

Make managers the adoption engine

Managers influence adoption more than executive memos or training programs do. Internal pilot data from multiple enterprise rollouts shows a 2x to 3x difference in team-level adoption rates depending on manager behavior. When a manager says "let's use AI to prep for this client meeting" in a team standup, that's permission and demonstration rolled into one moment. When a manager never mentions AI, employees read that silence as indifference or disapproval.

The coaching model matters. Managers who police ("why aren't you using Copilot?") create resistance. Managers who model ("here's how I used it to cut my board prep from three hours to forty minutes") create curiosity. The distinction sounds soft, but the adoption data backs it up.

Harness the Gen Z mentorship effect

Something counterintuitive is happening in organizations where AI adoption is working: younger employees are mentoring senior colleagues on AI workflows. This isn't a formal program in most cases. It's organic. A 24-year-old analyst shows a 45-year-old VP how to write better prompts, and suddenly the VP's team meetings are 30% shorter because prep work got automated.

Smart workplace leaders formalize this. Pair junior AI-fluent employees with senior domain experts. Both sides benefit. The junior employee gains visibility and relationship capital. The senior employee gains capability without the stigma of attending a "basics" training session.

Create safety for experimentation

No one wants to be the person who feeds confidential data into a public AI model, gets flagged by security, and becomes the cautionary tale in next quarter's compliance training. Clear guidelines on what data can and can't be used with which tools remove the paralysis. "Everything is fine" is as harmful as "nothing is allowed," because both leave employees guessing.

Publish a one-page decision tree: public data goes here, internal data goes there, confidential data never leaves these approved systems. Update it quarterly. Make it findable in under 10 seconds from any company communication tool.
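The same decision tree can also live as a small lookup that a chatbot or intranet widget answers from. The Python sketch below is one possible shape, not a recommended policy: the three data classes come from the paragraph above, while the tool names (`consumer_chatbot`, `enterprise_assistant`, `internal_llm`) and the mapping between them are hypothetical placeholders for whatever your security team has actually approved.

```python
from enum import Enum


class DataClass(Enum):
    PUBLIC = "public"
    INTERNAL = "internal"
    CONFIDENTIAL = "confidential"


# Hypothetical mapping of data class to approved tools.
# Replace with the real list your security team maintains.
APPROVED_TOOLS = {
    DataClass.PUBLIC: {"consumer_chatbot", "enterprise_assistant", "internal_llm"},
    DataClass.INTERNAL: {"enterprise_assistant", "internal_llm"},
    DataClass.CONFIDENTIAL: {"internal_llm"},
}


def may_use(tool: str, data_class: DataClass) -> bool:
    """Return True if the tool is approved for this class of data."""
    return tool in APPROVED_TOOLS[data_class]


def explain(tool: str, data_class: DataClass) -> str:
    """Give the employee a one-line answer instead of a policy document."""
    if may_use(tool, data_class):
        return f"OK: {tool} is approved for {data_class.value} data."
    allowed = ", ".join(sorted(APPROVED_TOOLS[data_class]))
    return f"Not approved: use one of [{allowed}] for {data_class.value} data."


if __name__ == "__main__":
    print(explain("consumer_chatbot", DataClass.PUBLIC))
    print(explain("consumer_chatbot", DataClass.CONFIDENTIAL))
```

Keeping the policy in one table means the quarterly update is a one-line change, and the refusal message always points employees to an approved alternative rather than leaving them guessing.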

How to measure AI adoption and impact

Adoption metrics versus business metrics

Counting licenses issued tells you about procurement. Counting weekly active users tells you about adoption. Counting hours saved per user per week tells you about impact. These are three different questions, and most organizations only track the first one.
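A short Python sketch shows how the three questions separate in practice. The `TeamSnapshot` structure and the sample figures are hypothetical, and `hours_saved_total` assumes you have some pre-AI baseline or survey estimate to measure against, which many teams won't have yet.

```python
from dataclasses import dataclass


@dataclass
class TeamSnapshot:
    """Hypothetical one-week snapshot for a single team."""
    licenses_issued: int
    weekly_active_users: int
    hours_saved_total: float  # assumed: estimated against a pre-AI baseline


def procurement(snapshot: TeamSnapshot) -> int:
    """Procurement question: how many seats did we buy?"""
    return snapshot.licenses_issued


def adoption_rate(snapshot: TeamSnapshot) -> float:
    """Adoption question: what share of licensed users were active this week?"""
    if snapshot.licenses_issued == 0:
        return 0.0
    return snapshot.weekly_active_users / snapshot.licenses_issued


def hours_saved_per_active_user(snapshot: TeamSnapshot) -> float:
    """Impact question: how many hours did each active user save this week?"""
    if snapshot.weekly_active_users == 0:
        return 0.0
    return snapshot.hours_saved_total / snapshot.weekly_active_users


if __name__ == "__main__":
    # Illustrative numbers only.
    team = TeamSnapshot(licenses_issued=200, weekly_active_users=18, hours_saved_total=45.0)
    print(f"Licenses issued: {procurement(team)}")
    print(f"Weekly active share: {adoption_rate(team):.0%}")
    print(f"Hours saved per active user: {hours_saved_per_active_user(team):.1f}")
```

A team can look healthy on the first number and poor on the second, or modest on the second and strong on the third, which is why reporting them separately matters.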

Build a maturity model specific to your organization

"Good" isn't 100% adoption. Good is the right people using the right AI tools for the right tasks, measured against outcomes that matter to the business. A legal team with 40% adoption but a 60% reduction in contract review time is outperforming a marketing team at 90% adoption that's producing the same output as before, with nothing to show for the investment.

The maturity spectrum for most organizations looks like this:

1. Experimental: Scattered individual use, no governance, no measurement. This is where roughly 55% of companies sit today despite claiming "AI adoption."

2. Structured: Defined use cases, training programs, basic usage tracking. About 30% of companies.

3. Integrated: AI embedded in standard operating procedures, manager-led adoption, business outcome measurement. Maybe 12% of companies.

4. Optimizing: Continuous refinement based on data, AI informing strategy (not just executing tasks), cross-functional adoption parity. Under 5%.

Most companies claiming Stage 3 are sitting at Stage 1 with better PR.

Link adoption data to workplace decisions

When a facilities team sees that AI-assisted meeting scheduling has reduced conference room booking conflicts by 35% on floors where adoption is high, that's a data point that justifies both continued AI investment and a reconfiguration of meeting spaces on floors where adoption lags. The insight isn't "AI is good." The insight is "Floor 7 needs training, and Floor 3 might need fewer 8-person rooms and more focus pods."

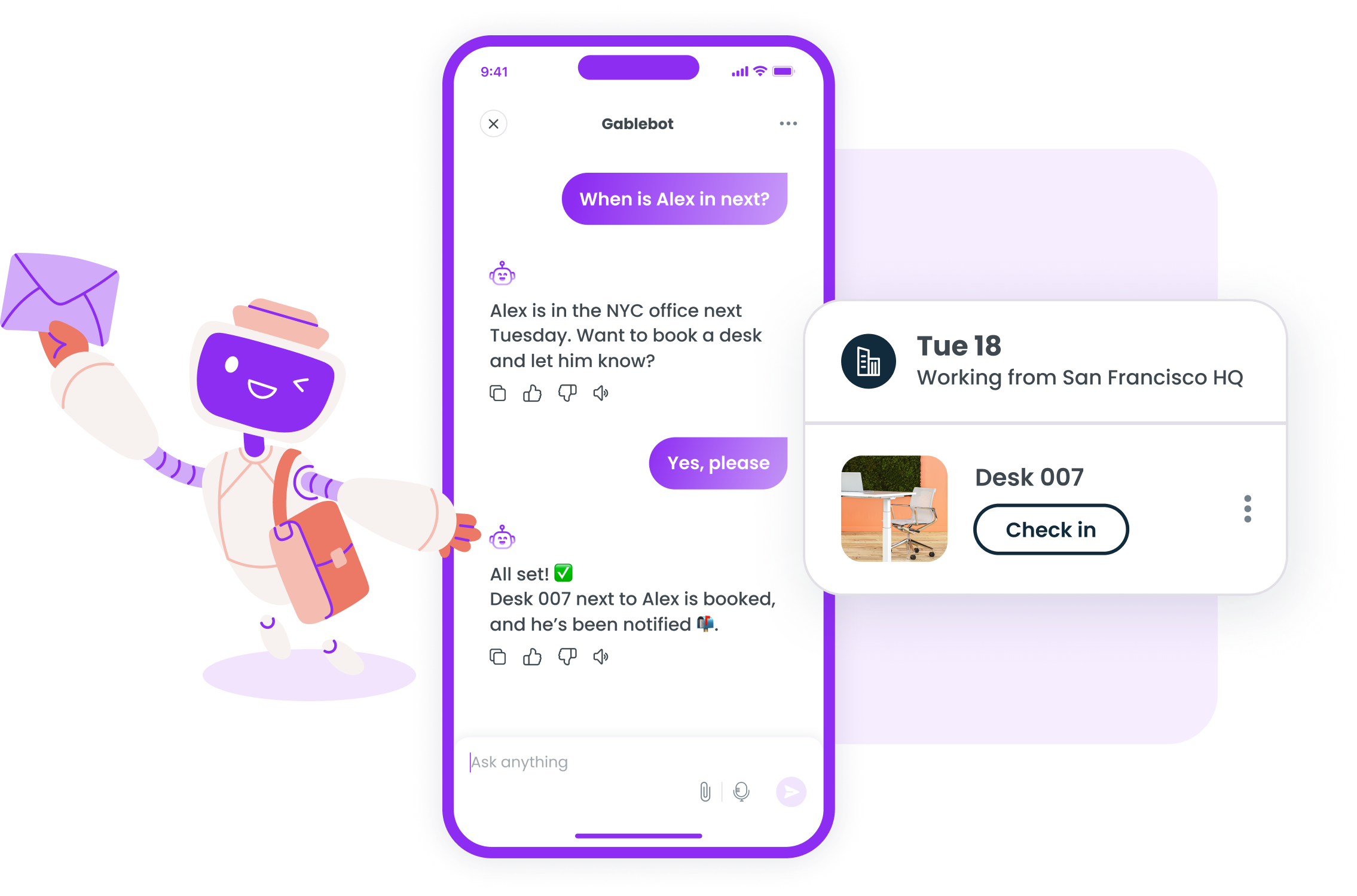

This is where workplace analytics and AI adoption measurement converge. Platforms like Gable can combine usage data from your workplace technology stack with space utilization data and collaboration patterns, creating a picture that neither dataset provides alone.

The future of workplace AI: what's coming in 2026 and 2027

Agentic AI changes the adoption calculus

The current wave of AI adoption is about individual productivity: one person, one prompt, one output. Agentic AI, where AI systems execute multi-step workflows autonomously, shifts the conversation from "can you use this tool?" to "can you supervise this system?" That's a fundamentally different skill set.

A workplace operations team that currently uses AI to draft event communications will soon have AI agents that find venues, send invitations, track RSVPs, adjust catering orders based on response rates, and generate post-event summaries, all without a human touching each step. The human role becomes quality control, exception handling, and strategic judgment. Training programs built for the current paradigm won't survive this shift.

AI-augmented collaboration is the real prize

Research from the World Economic Forum surfaced a finding that should concern every workplace leader: early AI adopters report weaker connections to co-workers and lower perceived productivity. That's not an argument against AI. It's evidence that AI adoption without intentional collaboration design isolates people.

The organizations getting this right are using AI to handle the logistics of collaboration (scheduling, space booking, agenda preparation, follow-up tracking) while investing more deliberately in the human elements: in-person gatherings, team events, and structured time for the kind of unstructured conversation that builds trust. AI handles the rote work. Humans do what AI can't: build relationships, navigate ambiguity, and make judgment calls that require empathy.

Governance isn't optional anymore

The shadow AI problem will get worse before it gets better. As AI tools become more capable and more accessible, the gap between what employees can do and what organizations have sanctioned widens. Companies that don't establish clear governance frameworks in 2026 will spend 2027 cleaning up data exposure incidents, compliance violations, and the trust damage that comes with both.

Governance doesn't mean restriction. It means clarity: which tools are approved, what data is permissible, where outputs get reviewed, who's accountable when something goes wrong. Companies that treat governance as an enabler of adoption rather than a brake on it tend to see stronger sustained use over time.

Adoption is a human problem with a human solution

The technology works. That stopped being the question sometime in mid-2024. The question now is whether organizations can change how people work, not what tools they use. That requires clear strategy (which 60% of leaders still lack), structured training (which 22% of employees still haven't received), and honest measurement (which almost nobody is doing well).

Workplace AI adoption is a change management challenge that happens to involve technology. The leaders who treat it that way, who start with workflows instead of tools and measure business outcomes instead of license counts, are building the organizational muscle that matters. Getting people to try AI once isn't the hard part. The hard part is integrating it into daily work, measuring whether it's helping, and adjusting when it isn't. That takes visibility, data, and the willingness to iterate every single quarter.

Gable unifies your people, spaces, and workplace data so you can make decisions grounded in reality, not assumptions. See how leading companies use Gable to optimize their workplace strategy.
